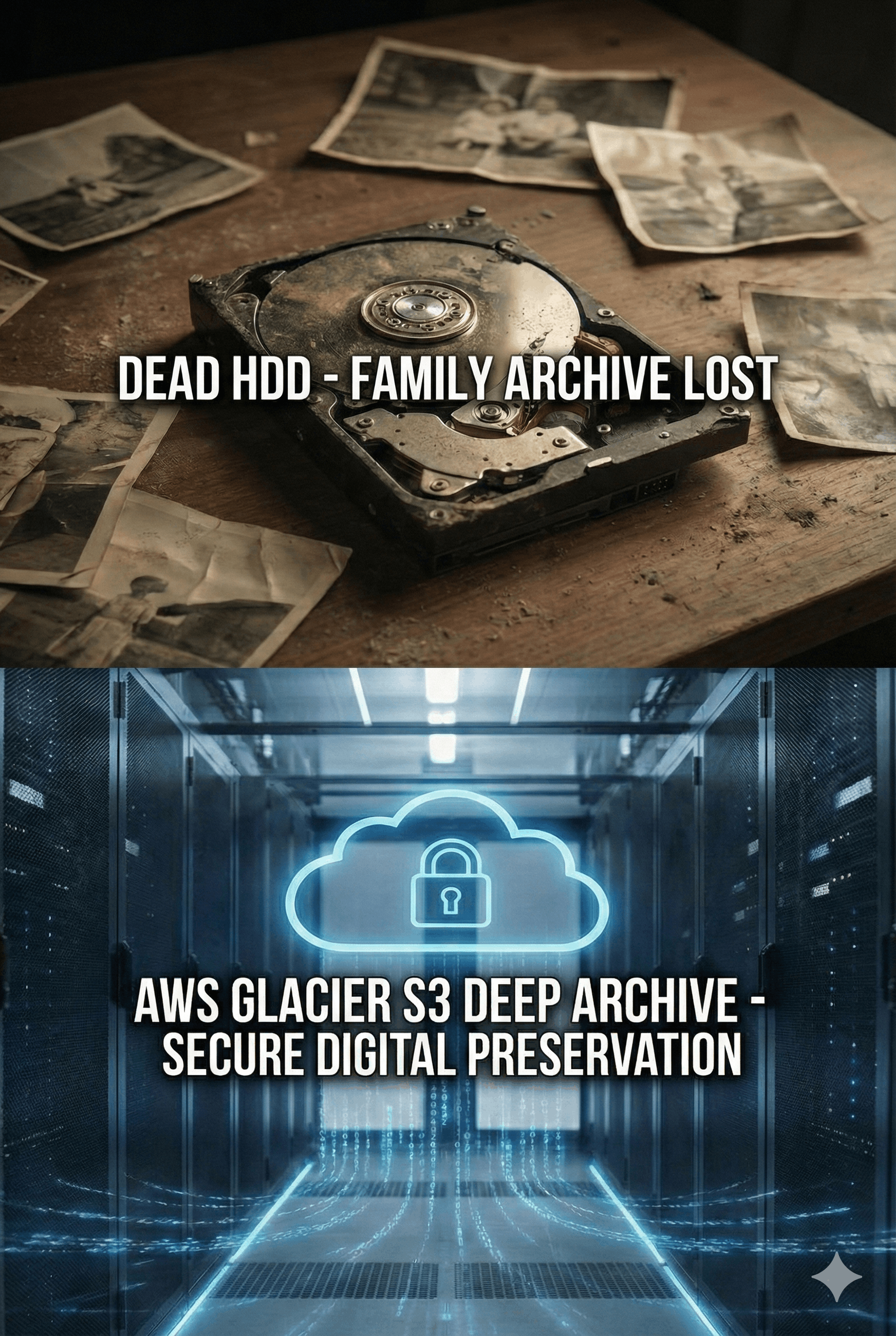

Saving Data for Disaster Recovery with Amazon S3 Glacier Deep Archive

TL;DR

- Create an AWS account, an S3 bucket in a cheap region, and a dedicated IAM user with minimal S3-related permissions.
- Install & configure the AWS CLI on the machine that will do the upload.
- Stage 1 (Archive): Run glacier_archive_split.sh to compress source folders into ~100GB .tar.gz chunks across two transit disks (uses pigz for fast parallel compression).
- Stage 2 (Upload): Run glacier_upload.sh to upload the archives to S3 Glacier Deep Archive via resumable multipart upload (100MB parts, crash-safe).
- Cost: ~$1/month per TB stored; uploads are free; full retrieval of 1TB costs ~$96 (mostly data transfer out).
- Safety: the original data is never touched, every upload is verified, and resume survives power outages (losing at most ~100MB of progress).
- This post includes a CLAUDE.md file, so you can adapt the setup to your needs via Claude Code.

...
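To make the cost figures above concrete, here is a minimal sketch that turns them into a quick estimate. The two rates are the approximate numbers quoted in this TL;DR (not live AWS pricing), and `estimate_cost` is a hypothetical helper name, not part of the scripts in this post.

```shell
#!/usr/bin/env bash
# Rough cost estimate based on the approximate figures quoted in the TL;DR.
# Both rates are assumptions taken from this post; check current AWS pricing
# for your region before relying on them.
STORAGE_USD_PER_TB_MONTH=1   # "~$1/month per TB stored"
RETRIEVAL_USD_PER_TB=96      # "full retrieval of 1TB costs ~$96"

# estimate_cost <size_tb> <months>   (hypothetical helper)
# Prints the total storage cost over the period and the cost of one full retrieval.
estimate_cost() {
  local size_tb="$1" months="$2"
  awk -v tb="$size_tb" -v m="$months" \
      -v s="$STORAGE_USD_PER_TB_MONTH" -v r="$RETRIEVAL_USD_PER_TB" 'BEGIN {
    printf "storage_usd=%.2f\n", tb * m * s
    printf "full_retrieval_usd=%.2f\n", tb * r
  }'
}

# Example: 2 TB kept for 3 years.
estimate_cost 2 36
```

With these assumed rates, 2 TB kept for 36 months comes to roughly $72 in storage, while a single full restore would cost about $192. In this example, retrieving the data once costs more than storing it for three years, which is the expected trade-off for a disaster-recovery archive you hope never to read back.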