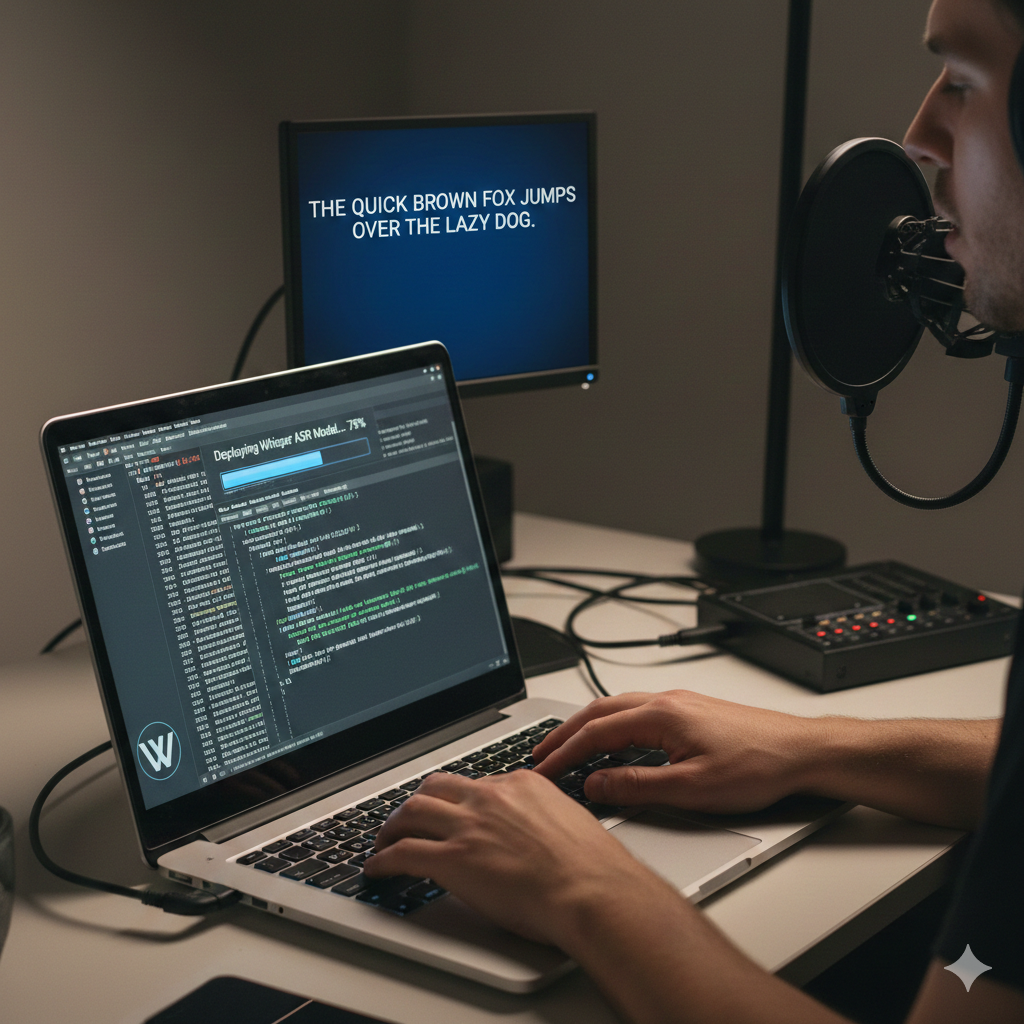

Set up Whisper locally on macOS

git clone https://github.com/ggerganov/whisper.cpp

cd whisper.cpp

bash ./models/download-ggml-model.sh large-v3

python3 -m venv whisper-env

source whisper-env/bin/activate

pip3 install ane_transformers openai-whisper coremltools

./models/generate-coreml-model.sh large-v3

brew install cmake ffmpeg

WHISPER_COREML=1 make -j
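Before wiring up the shell function, it can help to confirm that the steps above produced the files the rest of these notes rely on. The sketch below is a hypothetical helper (check_whisper_setup is not part of whisper.cpp). It assumes the CLI ends up at build/bin/whisper-cli, the path used by the transcribe_ru function, and that the Core ML script writes models/ggml-<model>-encoder.mlmodelc; both locations may differ between whisper.cpp versions.

```shell
# Hypothetical helper: report which expected artifacts are missing.
# Usage: check_whisper_setup [whisper.cpp dir] [model name]
check_whisper_setup() {
  local root="${1:-.}"
  local model="${2:-large-v3}"
  local missing=0

  # ggml weights downloaded by models/download-ggml-model.sh
  if [ ! -f "$root/models/ggml-${model}.bin" ]; then
    echo "missing: models/ggml-${model}.bin"
    missing=1
  fi

  # Core ML encoder generated by models/generate-coreml-model.sh (a directory)
  if [ ! -d "$root/models/ggml-${model}-encoder.mlmodelc" ]; then
    echo "missing: models/ggml-${model}-encoder.mlmodelc"
    missing=1
  fi

  # CLI binary; this location is an assumption and may differ between versions
  if [ ! -x "$root/build/bin/whisper-cli" ]; then
    echo "missing: build/bin/whisper-cli"
    missing=1
  fi

  [ "$missing" -eq 0 ] && echo "setup looks complete"
  return "$missing"
}
```

Run it from inside the whisper.cpp directory as check_whisper_setup . large-v3. If everything is reported present, a quick smoke test on an audio file of your own (for example ./build/bin/whisper-cli -m models/ggml-large-v3.bin -f some-16khz.wav) should confirm that the binary loads the model.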

Open ~/.zshrc in an editor, add the function below, then reload the configuration:

nano ~/.zshrc

source ~/.zshrc

function transcribe_ru() {
  if [ -z "$1" ]; then
    echo "Usage: transcribe_ru <audio_file> [model]"
    echo "Default model: large-v3"
    return 1
  fi

  local input_file="$1"
  local filename=$(basename "$input_file")
  local stem="${filename%.*}"
  local ext="${filename##*.}"
  local model="${2:-large-v3}"
  local whisper_path="$HOME/Documents/projects/ai-whisper/whisper.cpp"
  local whisper_bin="$whisper_path/build/bin/whisper-cli"

  # Check if model exists
  if [ ! -f "$whisper_path/models/ggml-${model}.bin" ]; then
    echo "Error: Model 'ggml-${model}.bin' not found in $whisper_path/models/"
    return 1
  fi

  # Only convert if not WAV
  local audio_file="$input_file"
  if [ "$ext" != "wav" ] && [ "$ext" != "WAV" ]; then
    echo "Converting ${ext} to 16kHz mono WAV..."
    audio_file="/tmp/${stem}_16k.wav"
    # Rely on ffmpeg's exit status, so a stale file in /tmp cannot mask a failure
    if ! ffmpeg -y -i "$input_file" -ar 16000 -ac 1 -c:a pcm_s16le "$audio_file" > /dev/null 2>&1; then
      echo "Error: Audio conversion failed."
      return 1
    fi
  else
    echo "Using existing WAV file (assuming 16kHz mono)..."
  fi

  echo "Transcribing (Language: Russian, Model: $model)..."
  "$whisper_bin" \
    -m "$whisper_path/models/ggml-${model}.bin" \
    -f "$audio_file" \
    -l ru \
    -otxt \
    -of "${stem}"
  local rc=$?

  # Cleanup temp file if created
  if [ "$audio_file" != "$input_file" ]; then
    rm -f "$audio_file"
  fi

  # Report success only if whisper-cli exited cleanly
  if [ "$rc" -ne 0 ]; then
    echo "Error: Transcription failed."
    return "$rc"
  fi
  echo "✅ Done! Output: ${stem}.txt"
}
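The function names its output and decides whether to convert the input using two shell parameter expansions on the file name. As a minimal sketch of how they behave, the hypothetical helper split_name below (not part of the function above, added only for illustration) prints the stem and extension for a given path.

```shell
# Hypothetical helper that mirrors how transcribe_ru splits a path.
split_name() {
  local filename
  filename=$(basename "$1")
  # ${var%.*} drops the shortest trailing ".something";
  # ${var##*.} keeps only what follows the last dot.
  echo "stem=${filename%.*} ext=${filename##*.}"
}

split_name "/Users/me/Downloads/lecture.part1.m4a"   # stem=lecture.part1 ext=m4a
split_name "notes"                                   # stem=notes ext=notes
```

Two consequences follow. A file without an extension yields an "extension" equal to its whole name, so it is treated as non-WAV and sent through ffmpeg. And because only the stem is passed to -of, the transcript is written to the current directory as <stem>.txt, not next to the input file.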

If you need a specific version of Xcode, look for it at https://xcodereleases.com/

Extract audio from video

ffmpeg -i input.mkv -ar 16000 -ac 1 -c:a pcm_s16le output.wav
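Since transcribe_ru skips conversion for .wav inputs and only assumes they are 16 kHz mono, it may be worth checking a WAV file before transcribing it. The sketch below uses ffprobe, which is installed alongside ffmpeg; is_16k_mono is a hypothetical helper name, not something provided by ffmpeg or whisper.cpp.

```shell
# Hypothetical helper: succeed only if the first audio stream is 16 kHz mono.
is_16k_mono() {
  local rate channels
  # Print just the value of each field, without section wrappers or key names
  rate=$(ffprobe -v error -select_streams a:0 \
    -show_entries stream=sample_rate \
    -of default=noprint_wrappers=1:nokey=1 "$1")
  channels=$(ffprobe -v error -select_streams a:0 \
    -show_entries stream=channels \
    -of default=noprint_wrappers=1:nokey=1 "$1")
  [ "$rate" = "16000" ] && [ "$channels" = "1" ]
}
```

For example, is_16k_mono output.wav && echo "ready for whisper-cli" prints the message only when the file matches; otherwise re-encode it with the ffmpeg command above.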